The best part of using LLMs? Finding out you and the AI are equally confused about your code! 😅

While we know that LLM hallucinations are definitely a ‘thing’, these models have the potential to work wonders when applied correctly to the right use case at the right time.

In the world of fast-paced software development, where efficiency is as important as innovation, Large Language Models (LLMs) and Generative AI (GenAI) have emerged as groundbreaking tools that can dramatically improve developer productivity. As engineering leaders, it's essential to understand how these technologies can streamline your development workflows, reduce friction, and even inspire creative solutions.

In this article, we'll explore practical use cases for LLMs and GenAI, highlight the best models for each scenario, and provide clear action steps to help you kickstart the integration of these tools into your teams.

1. Code Generation & Autocompletion

The most direct application of LLMs for developers is automating code generation. Models like OpenAI’s Codex, and tools built on such models like GitHub Copilot, can help developers write code faster by suggesting entire blocks of code or completing lines as they type. These tools are particularly useful for:

Boilerplate code generation: Instead of wasting time on repetitive, standardized code (e.g., initializing objects, setting up APIs, or basic error handling), developers can focus on more complex logic.

Writing tests: Codex and similar models can help generate unit and integration tests based on the existing code, saving significant time during development.

Framework-specific tasks: For instance, if your team is using frameworks like Django or React, Codex can assist in setting up project scaffolding, routing, or component creation.

Start by integrating GitHub Copilot into your IDE (such as VS Code) for individual developers. Encourage your team to experiment with it for both small tasks and larger-scale code generation. Evaluate which tasks are most effectively supported by the AI.
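To make the test-writing use case concrete, here is a minimal, hypothetical sketch of what to expect and what to review. The `slugify` helper and its tests are invented for illustration and are not output from any particular tool; the point is that generated tests are short, checkable claims a developer can verify at a glance.

```python
import re


def slugify(title: str) -> str:
    """Turn a title into a lowercase, hyphen-separated URL slug."""
    # Replace every run of non-alphanumeric characters with a single hyphen.
    slug = re.sub(r"[^a-z0-9]+", "-", title.lower())
    # Trim hyphens left over at either end.
    return slug.strip("-")


# The kind of tests an assistant might draft for the function above.
# A human still decides whether these are the cases that matter.
def test_basic_title():
    assert slugify("Hello, World!") == "hello-world"


def test_collapses_whitespace_and_punctuation():
    assert slugify("  LLMs  &  GenAI  ") == "llms-genai"


def test_empty_string():
    assert slugify("") == ""
```

Functions named `test_*` like these can be collected by a runner such as pytest. When evaluating an assistant, check whether the drafted tests cover edge cases (empty input, odd punctuation) or only the happy path.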

2. Code Review & Error Detection

LLMs can act as virtual code reviewers. Tools like DeepCode (now part of Snyk) and Tabnine can help identify potential bugs, security vulnerabilities, and inefficient code patterns. For example, DeepCode uses machine learning to scan your codebase for patterns that might cause issues in production, while also suggesting fixes.

Use cases include:

Automating static code analysis: LLMs can analyze pull requests and flag potential issues as they are opened, helping reviewers catch subtle bugs or performance bottlenecks.

Suggesting performance improvements: Some LLMs can recognize inefficient algorithms or data structures and propose alternatives that optimize performance or reduce memory usage.

Integrate these tools into your CI/CD pipeline to provide real-time code quality feedback. Start with small projects to evaluate how effectively the LLMs catch errors, and then scale them across larger projects.
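If you want to prototype this before adopting a commercial tool, a CI step can be sketched roughly as follows. Everything here is an assumption for illustration: `ask_model` stands in for whatever client your team uses to call a model, the prompt wording is made up, and the 8,000-character budget is arbitrary.

```python
from typing import Callable, List

MAX_DIFF_CHARS = 8000  # assumed budget; tune to your model's context window


def build_review_prompt(diff: str, max_chars: int = MAX_DIFF_CHARS) -> str:
    """Wrap a unified diff in review instructions, truncating very large diffs."""
    truncated = len(diff) > max_chars
    body = diff[:max_chars]
    note = "\n[diff truncated]" if truncated else ""
    return (
        "Review this diff for bugs, security issues, and performance problems.\n"
        "Reply with one finding per line, or NONE if you see no issues.\n\n"
        f"{body}{note}"
    )


def review_diff(diff: str, ask_model: Callable[[str], str]) -> List[str]:
    """Return the model's findings as a list of non-empty lines."""
    # `ask_model` is a hypothetical callable: prompt in, reply text out.
    reply = ask_model(build_review_prompt(diff))
    findings = [line.strip() for line in reply.splitlines() if line.strip()]
    return [] if findings == ["NONE"] else findings
```

Passing the model call in as a function keeps the pipeline step testable without network access, and makes it easy to swap providers during a pilot. Treat the findings as comments for a human reviewer, not as a merge gate.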

3. Documentation Assistance

Writing and maintaining documentation is often seen as a chore by developers, but it’s critical for long-term project success. LLMs like GPT-4 or Claude by Anthropic can assist in automating documentation creation, making it easier to maintain and keep up to date.

Practical applications include:

Generating API documentation: Given code annotations or a list of endpoints, LLMs can generate comprehensive documentation, including request/response examples.

Commenting code: Developers can use LLMs to automatically generate meaningful comments in their code, improving readability and maintainability.

Summarizing complex codebases: LLMs can parse through large codebases and generate summaries or overviews, helping new team members onboard faster.

Use GPT-4 or Claude to draft API docs and code comments, then have developers review and refine them. This will reduce the time spent on documentation while maintaining quality.
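One way to keep drafted comments grounded in the real code is to build the request from the function itself rather than from a description of it. The sketch below uses only Python's standard `inspect` module; the prompt wording and the `apply_discount` example are hypothetical, and sending the prompt to a model is left to whichever client you use.

```python
import inspect
from typing import Callable


def doc_request_for(func: Callable) -> str:
    """Build a documentation prompt from a function's name, signature, and docstring."""
    signature = inspect.signature(func)
    existing = inspect.getdoc(func) or "(no docstring yet)"
    return (
        f"Write a docstring for `{func.__name__}{signature}`.\n"
        f"Existing docstring: {existing}\n"
        "Describe parameters, return value, and raised exceptions.\n"
        "Do not describe behaviour that is not visible in the code."
    )


# Hypothetical function used only to demonstrate the prompt builder.
def apply_discount(price: float, percent: float = 10.0) -> float:
    return price * (1 - percent / 100)


if __name__ == "__main__":
    print(doc_request_for(apply_discount))
```

Because the signature comes straight from the code, the reviewer only has to check the prose the model adds, not whether parameter names and defaults were copied correctly.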

4. Debugging Assistance

Debugging is often the most time-consuming part of software development. LLMs can help developers narrow down the root cause of a bug more quickly by analyzing logs, stack traces, or code snippets. Code-focused models like CodeT5 and StarCoder are trained on source code, so they can take the surrounding code into account and suggest possible fixes.

Potential use cases:

Log analysis: If your team is overwhelmed by complex logs during debugging sessions, LLMs can help interpret these logs, identify error patterns, and even suggest fixes.

Hypothesis generation: By reviewing the code and output, LLMs can propose potential causes for bugs or even identify edge cases that may not be immediately obvious.

Have your team start using LLMs like CodeT5 when faced with difficult bugs. Encourage them to input error logs or problematic code snippets to get a fresh perspective on potential causes and fixes.
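Raw logs are usually too long and too repetitive to paste into a model as-is, so a small pre-processing step helps. The sketch below is a minimal example that assumes a `<date> <time> <LEVEL> <message>` line format (your logs will likely differ) and groups similar ERROR messages so that only the distinct patterns need to be shared.

```python
import re
from collections import Counter
from typing import List, Tuple

# Assumed log format: "<date> <time> <LEVEL> <message>"; adjust to your logs.
LOG_LINE = re.compile(r"^\S+ \S+ (?P<level>[A-Z]+) (?P<message>.*)$")


def summarize_errors(log_text: str, top: int = 5) -> List[Tuple[str, int]]:
    """Return the most frequent ERROR messages with digits masked out."""
    counts: Counter = Counter()
    for line in log_text.splitlines():
        match = LOG_LINE.match(line)
        if match and match.group("level") == "ERROR":
            # Mask numbers so "timeout after 30s" and "timeout after 45s"
            # count as the same pattern.
            counts[re.sub(r"\d+", "<n>", match.group("message"))] += 1
    return counts.most_common(top)
```

Masking numbers also strips some identifiers from the text, but it is not a substitute for proper redaction. Check logs for secrets and personal data before sending them to any external service.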

5. Learning & Skill Development

LLMs can serve as personal coding mentors for your team, helping them quickly learn new languages, frameworks, or design patterns. Models like GPT-4 and Claude can answer questions about syntax, explain concepts, or even walk through complex programming patterns.

Potential use cases:

Language and framework guidance: If a developer is new to a language or framework, an LLM can provide explanations, examples, and best practices for efficient coding.

Design pattern recommendations: When working on a complex problem, an LLM can suggest relevant design patterns or architectures to ensure scalable solutions.

Encourage your developers to use LLMs for skill development. Integrate AI-powered learning into your team’s workflow by prompting them to ask models questions when they get stuck or want to explore new tools and libraries.

6. Automated Refactoring

Refactoring large codebases is essential to maintaining code quality but can be tedious and error-prone. LLMs such as Codex can automate parts of the refactoring process by:

Simplifying complex methods: LLMs can suggest how to break down long or convoluted functions into smaller, more manageable ones.

Optimizing code for performance: Models can identify inefficient code and suggest optimizations, such as replacing nested loops with more efficient algorithms.

Use an LLM to refactor legacy codebases incrementally. Start with smaller methods or functions and allow the model to propose simplifications. Always review and test refactored code thoroughly before pushing it to production.
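As an illustration of the nested-loop case, here is a hypothetical before-and-after pair. The function names and the task (finding IDs present in both lists) are invented for the example; what matters is the pattern of keeping the old version around long enough to check that the refactored one returns the same results.

```python
from typing import List


def common_ids_nested(a: List[int], b: List[int]) -> List[int]:
    """Original version: compares every pair, O(len(a) * len(b))."""
    result = []
    for x in a:
        for y in b:
            if x == y and x not in result:
                result.append(x)
    return result


def common_ids_set(a: List[int], b: List[int]) -> List[int]:
    """Refactored version: set lookups, roughly O(len(a) + len(b))."""
    in_b = set(b)
    seen = set()
    result = []
    for x in a:
        if x in in_b and x not in seen:
            seen.add(x)
            result.append(x)  # keep the first-seen order of `a`
    return result


if __name__ == "__main__":
    # Equivalence check on a small sample before replacing the old function.
    sample_a, sample_b = [3, 1, 3, 2], [2, 3, 5]
    assert common_ids_nested(sample_a, sample_b) == common_ids_set(sample_a, sample_b)
```

A suggested optimization can silently change behaviour such as ordering or duplicate handling, which is why the refactored version here preserves both and is compared against the original.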

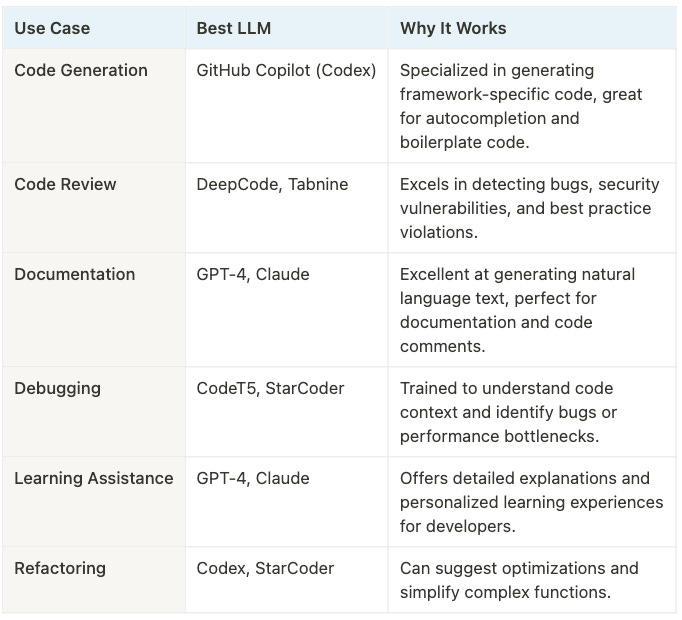

Best LLMs for Specific Use Cases

Getting Started: Practical Steps

Pilot Implementation: Choose one or two projects where you think LLMs could have the most immediate impact. Start with small-scale integrations, such as using GitHub Copilot for boilerplate code generation or DeepCode for code review.

Educate Your Team: Organize internal workshops or training sessions to introduce your developers to these tools. Make sure they understand how to use LLMs effectively and responsibly.

Integrate with Existing Tools: Look for opportunities to seamlessly integrate LLMs with your existing development environment, whether through IDE plugins, CI/CD pipelines, or documentation generators.

Monitor and Iterate: Track how the use of LLMs affects productivity, code quality, and developer satisfaction. Gather feedback from your team and adjust the tools you’re using based on what works best for your organization.

Conclusion

LLMs and GenAI are transforming the way developers work, automating mundane tasks and enhancing creativity. By carefully selecting the right tools and integrating them into your workflow, you can dramatically improve your team's productivity and focus on building innovative solutions. Start small, iterate, and let the AI handle the repetitive work while your team focuses on what really matters—delivering quality software faster.

Feel free to let us know in the comments how you have been leveraging LLMs for your teams and how effective they have been!

Credits 🙏

Writers- Cheers to our guest writer, Kshitij Mohan

Sponsors- Thanks to our sponsors Typo AI - Ship reliable software faster
